Introduced: 2021; Domain: Artificial intelligence; Paradigm: large-scale self-supervised pretraining; Coined by: Stanford CRFM [CRFM Foundation Models Report (2021)].

Definition and Origin

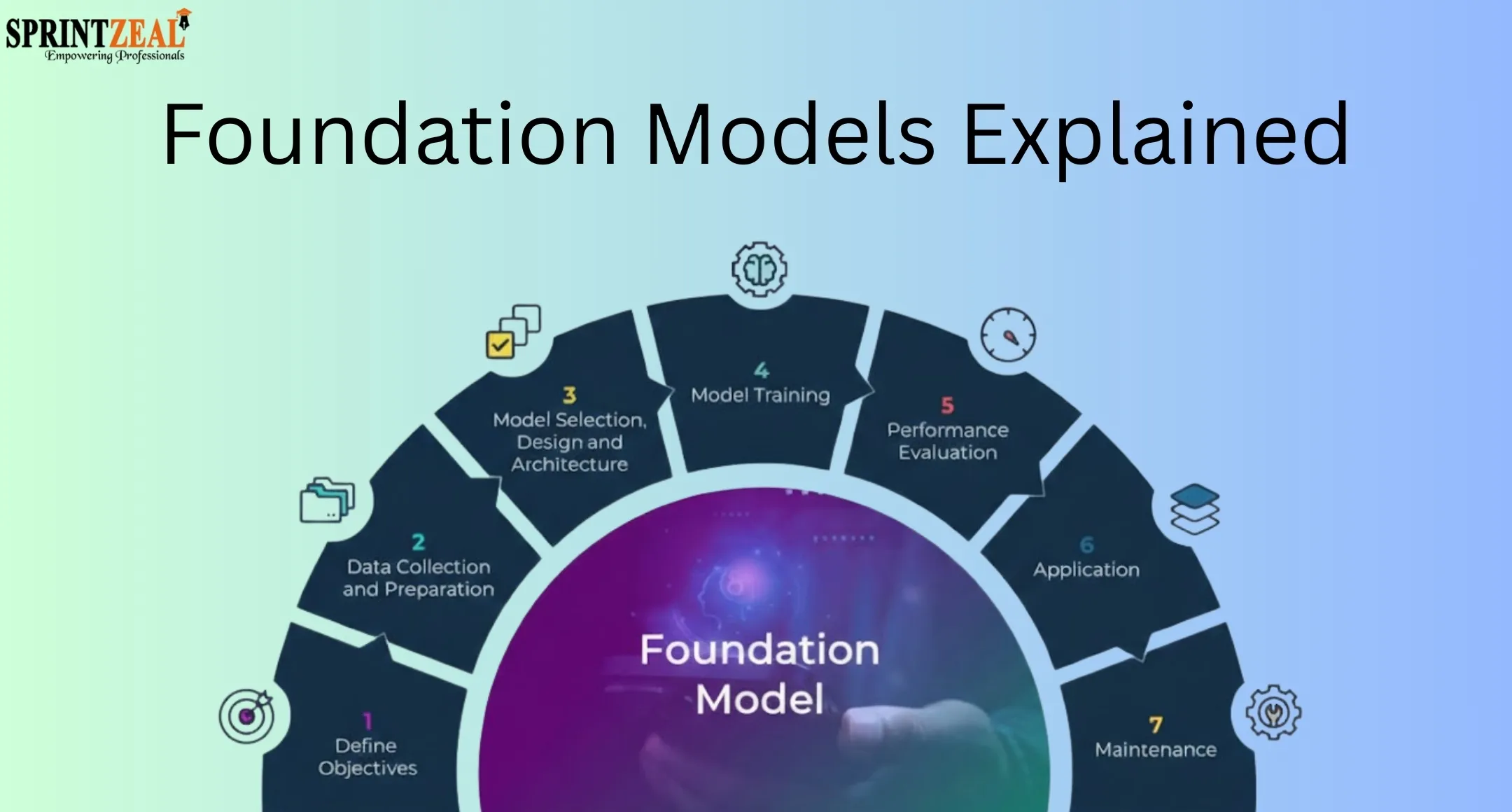

Foundation models are models trained on broad data, generally using self-supervision at scale, that can be adapted to a wide range of downstream tasks. The term was introduced by Stanford’s Center for Research on Foundation Models (CRFM) in 2021 [CRFM Foundation Models Report (2021); CRFM Report Overview]. The report emphasized two themes: emergence, where novel capabilities arise with scale, and homogenization, where a small set of models becomes the substrate for many applications [CRFM Foundation Models Report (2021); Reflections on Foundation Models].

Technical Foundations

The Transformer architecture replaced recurrence and convolution with attention mechanisms, enabling the efficient scaling that underpins modern foundation models [Attention Is All You Need]. Self-supervised objectives such as masked language modeling and next-token prediction exploit vast unlabeled corpora, as shown by BERT’s masked-language pretraining and later autoregressive LLMs [BERT; CRFM Foundation Models Report (2021)]. Scaling studies further documented predictable gains from increasing model and data size, while highlighting compute-data tradeoffs and the importance of compute‑optimal training, as in Chinchilla [Training Compute‑Optimal Large Language Models (Chinchilla); CRFM Foundation Models Report (2021)].
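
To make the attention mechanism concrete, here is a minimal sketch of single-head scaled dot-product attention, softmax(QK^T / sqrt(d_k)) V, in plain Python. The function names and toy inputs are illustrative assumptions. The sketch omits masking, multiple heads, and learned projections, so it is not a full Transformer layer.

```python
import math


def softmax(row):
    """Numerically stable softmax over a list of floats."""
    m = max(row)
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


def scaled_dot_product_attention(queries, keys, values):
    """Single-head attention without masking.

    queries: list of n_q vectors of length d_k
    keys:    list of n_k vectors of length d_k
    values:  list of n_k vectors of length d_v
    Returns (outputs, weights), one row per query.
    """
    d_k = len(keys[0])
    scale = 1.0 / math.sqrt(d_k)
    d_v = len(values[0])
    outputs, weights = [], []
    for q in queries:
        # Dot product of this query with every key, scaled by 1/sqrt(d_k).
        scores = [scale * sum(qi * ki for qi, ki in zip(q, k)) for k in keys]
        w = softmax(scores)
        # Each output is the attention-weighted average of the value vectors.
        out = [sum(w_j * v[c] for w_j, v in zip(w, values)) for c in range(d_v)]
        outputs.append(out)
        weights.append(w)
    return outputs, weights


if __name__ == "__main__":
    # Toy self-attention: the same three 2-d vectors act as Q, K, and V.
    toy = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
    _, attn = scaled_dot_product_attention(toy, toy, toy)
    for row in attn:
        print([round(x, 3) for x in row])
```

Every position attends to every other position in one step, with no sequential dependency between positions. That is the property that makes the computation easy to parallelize.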

Representative Models Across Modalities

- NLP and multimodal LLMs: GPT‑3 demonstrated strong few‑shot generalization from a 175B‑parameter autoregressive model [Language Models are Few-Shot Learners (GPT‑3)]. GPT‑4 extended this line to multimodal input, accepting text and images and producing text, with capabilities and evaluations described in its technical report [GPT‑4 Technical Report]. Google’s PaLM scaled a dense Transformer to 540B parameters, trained with the Pathways system [PaLM: Scaling Language Modeling with Pathways]. Meta’s LLaMA provided competitive open foundation language models in sizes from 7B to 65B parameters [LLaMA: Open and Efficient Foundation Language Models].

- Vision and vision‑language: CLIP learned aligned image‑text representations from large‑scale web pairs, enabling zero‑shot transfer across many tasks [CLIP: Learning Transferable Visual Models From Natural Language Supervision]. DALL‑E 2 adopted a two‑stage pipeline built on CLIP latents for high‑fidelity text‑to‑image generation: a prior maps a caption to a CLIP image embedding, and a decoder generates the image from that embedding [Hierarchical Text‑Conditional Image Generation with CLIP Latents (DALL‑E 2)]. Latent diffusion models achieved state‑of‑the‑art high‑resolution image synthesis at reduced compute by running diffusion in a learned latent space rather than in pixel space [High‑Resolution Image Synthesis with Latent Diffusion Models].

- Embodied AI and robotics: RT‑2 integrated vision‑language pretraining with action policies, transferring web knowledge to robotic control [RT‑2: Vision‑Language‑Action Models Transfer Web Knowledge to Robotic Control]. The CRFM report surveyed prospects for robotics, emphasizing multimodality and data collection challenges [CRFM Foundation Models Report (2021)].
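
Zero‑shot transfer with aligned image‑text embeddings, as described for CLIP above, can be sketched as follows: embed the image and one text prompt per candidate label, compare them by cosine similarity, and apply a softmax. The vectors below are made‑up 3‑dimensional stand‑ins for encoder outputs. `zero_shot_classify` and its temperature default are assumptions for illustration, not CLIP's actual API.

```python
import math


def l2_normalize(vec):
    """Scale a vector to unit length so dot products become cosine similarities."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec]


def zero_shot_classify(image_embedding, label_embeddings, temperature=0.07):
    """Return {label: probability} from cosine similarity followed by a softmax.

    image_embedding:  vector for one image (hypothetical encoder output)
    label_embeddings: {label: vector} for text prompts such as "a photo of a cat"
    temperature:      illustrative value; smaller values sharpen the distribution
    """
    img = l2_normalize(image_embedding)
    logits = {}
    for label, emb in label_embeddings.items():
        txt = l2_normalize(emb)
        logits[label] = sum(a * b for a, b in zip(img, txt)) / temperature
    # Stable softmax over the candidate labels.
    m = max(logits.values())
    exps = {k: math.exp(v - m) for k, v in logits.items()}
    total = sum(exps.values())
    return {k: e / total for k, e in exps.items()}


if __name__ == "__main__":
    # Made-up embeddings; a real system would obtain these from trained encoders.
    labels = {"cat": [1.0, 0.0, 0.0], "dog": [0.0, 1.0, 0.0], "car": [0.0, 0.0, 1.0]}
    probs = zero_shot_classify([0.9, 0.2, 0.1], labels)
    print(max(probs, key=probs.get), probs)
```

No task‑specific classifier is trained here. Changing the label set only requires embedding new text prompts.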

Training and Adaptation Paradigms

Foundation models are commonly trained with self‑supervised learning on web‑scale text, image‑text pairs, or other modalities, then adapted through fine‑tuning, prompting and in‑context learning, or instruction tuning. Reinforcement learning from human feedback (RLHF) aligns model behavior with user intent and safety guidelines in interactive settings [Training Language Models to Follow Instructions with Human Feedback (InstructGPT); CRFM Foundation Models Report (2021)]. Compute‑ and data‑scaling results inform the choice of model size, token budget, and training efficiency [Training Compute‑Optimal Large Language Models (Chinchilla)].
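
As a rough illustration of how scaling results turn into a token budget, the sketch below uses two widely quoted approximations: training compute C ≈ 6·N·D FLOPs for N parameters and D tokens, and a compute‑optimal ratio of about 20 tokens per parameter. Both constants are simplifying assumptions, not the fitted scaling laws from the Chinchilla paper, and the function names are hypothetical.

```python
import math

# Assumed rules of thumb, not exact fitted values.
FLOPS_PER_PARAM_TOKEN = 6.0   # C is approximately 6 * N * D
TOKENS_PER_PARAM = 20.0       # D is approximately 20 * N at the compute-optimal point


def training_flops(n_params, n_tokens):
    """Approximate training compute in FLOPs for a dense Transformer."""
    return FLOPS_PER_PARAM_TOKEN * n_params * n_tokens


def compute_optimal_split(flops_budget, tokens_per_param=TOKENS_PER_PARAM):
    """Split a FLOP budget into (n_params, n_tokens) under the assumptions above.

    Substituting D = r * N into C = 6 * N * D gives N = sqrt(C / (6 * r)).
    """
    n_params = math.sqrt(flops_budget / (FLOPS_PER_PARAM_TOKEN * tokens_per_param))
    n_tokens = tokens_per_param * n_params
    return n_params, n_tokens


if __name__ == "__main__":
    # Budget implied by a 70B-parameter model trained on 1.4T tokens.
    budget = training_flops(70e9, 1.4e12)
    n, d = compute_optimal_split(budget)
    print(f"budget={budget:.3e} FLOPs, params={n:.3e}, tokens={d:.3e}")
```

Under these assumptions, a budget of about 5.9e23 FLOPs maps back to roughly 7e10 parameters and 1.4e12 tokens. A model with more parameters than this at the same budget would see fewer tokens than the compute‑optimal point.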

Applications

Foundation models serve as general substrates across domains. In NLP and computer vision, they support classification, retrieval, summarization, translation, and generation through zero‑shot or few‑shot transfer or lightweight adaptation [Language Models are Few-Shot Learners (GPT‑3); CLIP: Learning Transferable Visual Models From Natural Language Supervision]. In healthcare, proposals for generalist medical AI envision shared models that reason across tasks over clinical text and imaging [Foundation models for generalist medical artificial intelligence]. Other surveyed applications include law, education, and robotics [CRFM Foundation Models Report (2021)].
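
Few‑shot transfer to a language task usually means placing a handful of worked examples in the prompt rather than updating any weights. The sketch below assembles such a prompt. The `Input:`/`Output:` template and the function name are assumptions chosen for illustration, and the resulting string would be passed to whatever text‑completion model is in use.

```python
def build_few_shot_prompt(instruction, examples, query):
    """Assemble an in-context prompt from (input, output) demonstration pairs.

    instruction: short task description placed at the top
    examples:    list of (input_text, output_text) demonstrations
    query:       the new input the model should complete
    """
    lines = [instruction.strip(), ""]
    for text, label in examples:
        lines.append(f"Input: {text}")
        lines.append(f"Output: {label}")
        lines.append("")
    # The prompt ends at an open "Output:" slot for the model to fill in.
    lines.append(f"Input: {query}")
    lines.append("Output:")
    return "\n".join(lines)


if __name__ == "__main__":
    demos = [("great film", "positive"), ("dull plot", "negative")]
    print(build_few_shot_prompt("Classify the sentiment.", demos, "loved it"))
```

Passing an empty `examples` list yields the zero‑shot form of the same prompt.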

Risks, Governance, and Societal Impact

The CRFM report catalogs risks spanning fairness, misuse, privacy, robustness, and ethics, emphasizing how homogenization can propagate shared flaws across the ecosystem [CRFM Foundation Models Report (2021); Reflections on Foundation Models]. Environmental concerns include the substantial energy and carbon costs of large‑scale training, motivating efficiency reporting and mitigation strategies [Energy and Policy Considerations for Deep Learning in NLP; CRFM Foundation Models Report (2021)]. Safety research addresses scalable oversight, robustness to distribution shift, and evaluation of emergent behaviors, with human‑in‑the‑loop methods such as RLHF forming part of current practice [CRFM Foundation Models Report (2021); Training Language Models to Follow Instructions with Human Feedback (InstructGPT)].

Historical Context and Trajectory

With BERT and its successors, pretraining shifted from a niche technique to a standard substrate for NLP, a sociotechnical inflection that later generalized to multimodal models [CRFM Foundation Models Report (2021); BERT]. Subsequent scaling produced emergent few‑shot abilities (GPT‑3), multimodal reasoning (GPT‑4), and generalization across tasks and embodiments (CLIP, RT‑2) [Language Models are Few-Shot Learners (GPT‑3); GPT‑4 Technical Report; CLIP: Learning Transferable Visual Models From Natural Language Supervision; RT‑2: Vision‑Language‑Action Models Transfer Web Knowledge to Robotic Control]. Research continues on compute‑optimal scaling, data curation, interpretability, and robust evaluation to realize benefits while managing systemic risks [Training Compute‑Optimal Large Language Models (Chinchilla); CRFM Foundation Models Report (2021)].